I have read dozens of useless articles on using AI as a product manager, so you don’t have to. Here is what they all got wrong and what to do instead. If you want to understand the underlying principles first, check out my article on how to solve business problems with AI.

Table of Contents

- Don’t Outsource Research to AI

- Don’t Outsource Thinking and Decision-making to AI

- How Can You Use AI as a Product Manager?

Remember, automating a bad idea doesn’t make it a good idea. It just lets you do stupid things faster.

Don’t Outsource Research to AI

Have you seen prompts such as: “I am creating an X. Can you help me with a list of competitors in this space, their value propositions, and their weaknesses?” Try the prompt with your product. Is the response really useful? Did AI generate anything but a generic list of weaknesses? The prompt works slightly better for value propositions, but that is superficial research based on the competitors’ websites. You could do the same in 15 minutes. Congratulations, you saved 15 minutes and got a list you will never read again.

Knowing your competition is Product Management 101. Asking AI for a summary won’t help you with anything. You should try their products, contact their support, and even go through their refund process. Everything that annoyed you is a potential opportunity for your product. AI won’t find those opportunities (yet?).

What if we asked AI to estimate the total addressable market? What would need to happen for the answer to be accurate? The data the model was trained on would need to be correct and up-to-date, the model would need to memorize the numbers correctly, and it would need to extrapolate accurately from what it learned. That is a lot of ifs. Are you willing to take that risk with one of the most essential pieces of information about your product?

Maybe we can ask AI to pretend to be a user and ask you questions about the product you are building to reveal the missing parts. It will be as helpful as the user personas you create in the safety of your office without talking to any real person.

Don’t Outsource Thinking and Decision-making to AI

You have done the actual research and written some notes. Now, you need to describe the requirements for your product. Why don’t you pass all your notes to AI and ask it to draft the Product Requirements Document? Just a draft! You will edit the document later. Is that OK? I don’t think so. Of course, generating a draft would save a lot of effort, but… the draft will influence your thinking. Generating the first version of a document looks like a helpful idea, but once you have seen the generated text, it limits what you think about afterward. The draft will bias you.

However, AI can still help with requirements if you flip the order. Start by writing the requirements yourself and, later, ask AI to find missing requirements based on what you have written and the notes you took during your research. Don’t accept everything AI produces. Consider whether each suggestion makes sense or merely duplicates something you already have.

I have seen people generate user stories from feature descriptions. That’s preposterous. Shouldn’t it be the other way around? You have a user story because the user has some intent and needs to achieve a goal. You write a description of a feature to help them achieve their goal. I have seen way too many product managers come up with feature ideas and then write made-up user stories to prove someone actually wants those features. Now, they are going to automate this backward process.

I don’t see anything wrong with using AI as a brainstorming buddy, as long as you use it after you have done your own brainstorming. AI can help you find new ideas, but it can’t replace your thinking. Also, you don’t want to be influenced by AI-generated ideas before you have formed your own.

Let’s generate success metrics with AI! Don’t do it. Do you really need AI to generate the same generic list of metrics everybody else has? MAU, DAU, growth rate, retention rate, session duration, number of sessions per time window, ad conversion rate, etc. Here is your list.

How Can You Use AI as a Product Manager?

What to do instead? Use AI for what LLMs do best, such as processing large amounts of text or generating text (without expecting ground-breaking creativity).

Finding Common Topics in User Feedback

AI can easily find the topics people complain about if you have a large volume of user feedback or reviews. Of course, AI can also find what people love about your product, so you can use those quotes in your marketing materials. How do we do it? If you can code, or are willing to use AI to generate code, take a look at my article about using AI for topic modeling. If not, the easiest way is to craft a clever prompt asking AI to detect topics in multiple reviews at once.

For example, if I wanted to extract the complaints from reviews of a particularly bad Australian barber I found on Google Maps, I could send the following prompt to AI:

Given a list of customer reviews, find the topic people complain about in each review and return a table containing the review number and the topic. If the review is positive or neutral, leave the table row empty.

---

1. I went to this barber shop because of all the great reviews but after i left i questioned wether they were even real reviews or not. The staff were reasonably friendly and welcoming but the haircut was probably the worst I've ever had.

When i sat in the chair i asked for a burst fade but the barber didnt know what this was so i just asked for skin fade but leave some length on the back and just a tiny bit off the top to tidy it up.

---

---

2. Left the shop looking like a bold lizard. The few times I’ve been here they have always managed to do the opposite of what I asked for. The service was nice but not a good service especially for the price.

---

---

3. Ignorant, un educated, real bad energy, bad haircut. Bad bad bad

---

---

4. Cash only!? It's 2019 dude.

---

---

5. Have being here before, good place.

Went there today and 40 minutes before closing they weren't taking any more customers already

---

I think ChatGPT did a decent job.

| Review Number | Complaint Topic |
|---------------|-----------------|
| 1 | Poor haircut quality, lack of style knowledge |
| 2 | Haircut not as requested, poor value for the price |
| 3 | Negative staff attitude, poor haircut quality |
| 4 | Payment method limitations (cash only) |
| 5 | - |
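Pasting hundreds of reviews into a chat window by hand gets old fast. If you script it, the work sits on both ends: numbering the reviews into a prompt like the one above, and turning the table that comes back into something you can count. Here is a minimal Python sketch of both steps. `build_prompt` and `parse_table` are hypothetical helper names, sending the prompt to a model is left out, and the parser assumes the model replies with a two-column Markdown table, which nothing guarantees.

```python
from __future__ import annotations

# The instruction from the example prompt above.
INSTRUCTION = (
    "Given a list of customer reviews, find the topic people complain about "
    "in each review and return a table containing the review number and the "
    "topic. If the review is positive or neutral, leave the table row empty."
)


def build_prompt(reviews: list[str]) -> str:
    """Number each review and wrap it in --- separators, like the example prompt."""
    parts = [INSTRUCTION]
    for number, review in enumerate(reviews, start=1):
        parts.append(f"---\n\n{number}. {review.strip()}\n\n---")
    return "\n\n".join(parts)


def parse_table(markdown: str) -> dict[int, str | None]:
    """Map review number -> complaint topic from a two-column Markdown table.

    Empty cells and "-" become None (no complaint). The header row, the
    |---| separator, blank lines, and anything that isn't a numbered
    two-cell row are skipped.
    """
    topics: dict[int, str | None] = {}
    for line in markdown.splitlines():
        # Drop exactly one outer pipe on each side, then split into cells.
        inner = line.strip().removeprefix("|").removesuffix("|")
        cells = [cell.strip() for cell in inner.split("|")]
        if len(cells) != 2 or not cells[0].isdigit():
            continue
        number, topic = cells
        topics[int(number)] = None if topic in ("", "-") else topic
    return topics


if __name__ == "__main__":
    print(build_prompt(["Cash only!? It's 2019 dude.", "Have being here before, good place."]))
    reply = """| Review Number | Complaint Topic |
|---------------|-----------------|
| 4 | Payment method limitations (cash only) |
| 5 | - |"""
    print(parse_table(reply))
```

Rows marked "-" (or left empty, as the prompt asked) come back as `None`, so you can drop them and pass the remaining topics to `collections.Counter` to see which complaints come up most often.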

Ad-hoc Data Analysis

You can use AI for ad-hoc data analysis instead of constantly bothering your data scientist. After all, ChatGPT can generate and run Python code. If you give AI your input data as files, it will read the files, write code to do whatever you asked, and generate a chart. If you can’t send your data to ChatGPT, you can use AI to write the code and run it on your own computer.

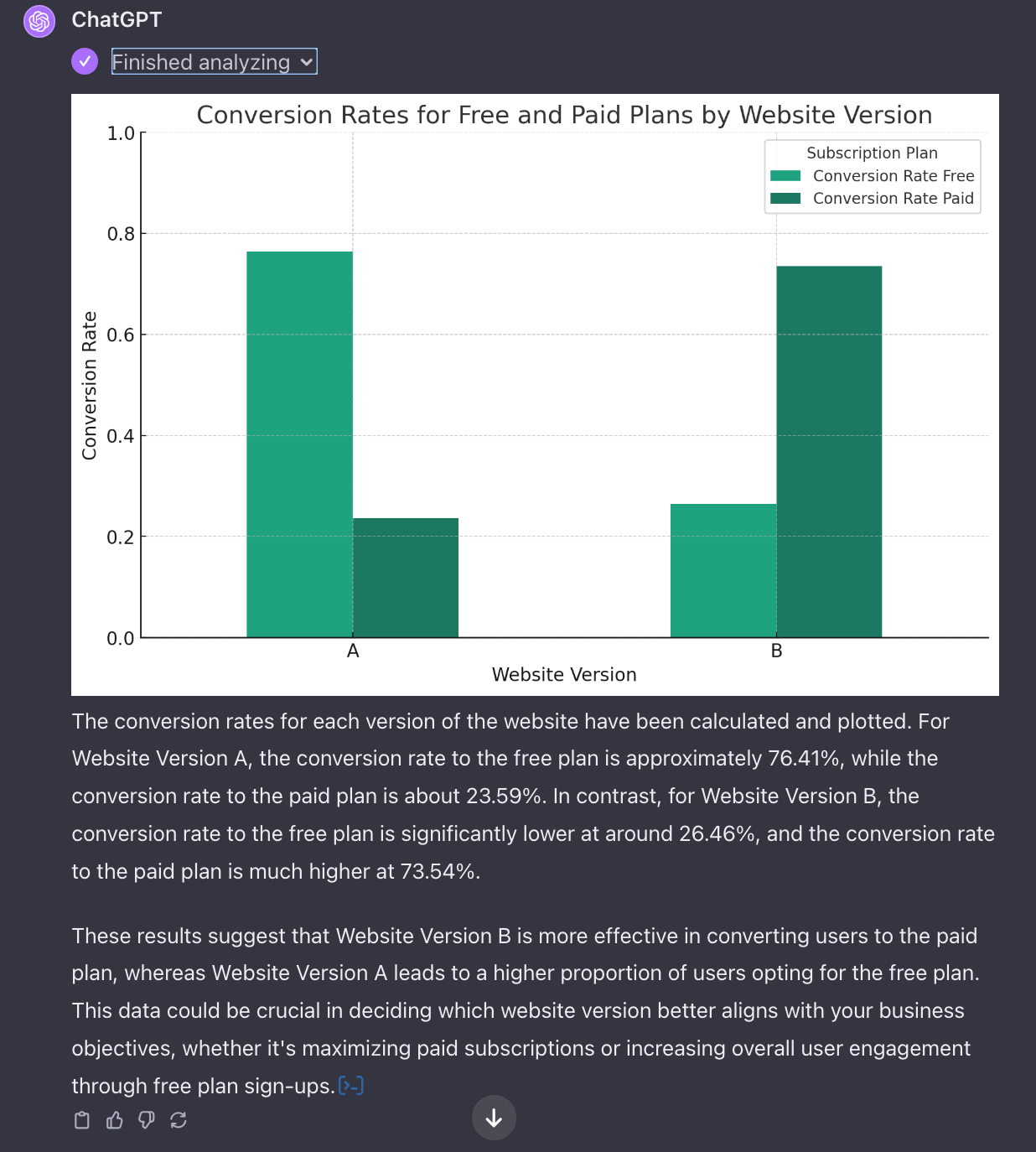

Suppose you have two datasets: the number of users who signed up for your product and the number of those who paid for a subscription. The data also records which version of the payment plan page each user saw while choosing between a free and a paid plan. You want to know whether the new version of the page increased the number of users who paid for a subscription. You can ask AI to generate the code that calculates the conversion rate for each version and plots the results.

I uploaded a CSV file with the daily number of paid subscriptions and free-plan sign-ups (plus a column indicating the A/B version of the website) and used the following prompt:

Given clickstream data containing the date, A/B version of the website, and the number of users who signed up for a free plan and a paid plan, calculate the conversion rate for each version of the website and plot the results.
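To give you an idea of what to expect (or what to ask for if you run the code yourself), here is a minimal sketch of the calculation in plain Python. I’m assuming a CSV with hypothetical columns `date`, `version`, `free_signups`, and `paid_signups`, and defining the conversion rate as paid sign-ups divided by all sign-ups for that version. Your columns and your definition may differ, and the sample rows are made up for illustration.

```python
import csv
import io
from collections import defaultdict


def conversion_rates(csv_text: str) -> dict[str, float]:
    """Return paid / (free + paid) sign-ups for each website version.

    Assumes hypothetical columns: date, version, free_signups, paid_signups.
    Daily rows are summed per version before dividing.
    """
    free = defaultdict(int)
    paid = defaultdict(int)
    for row in csv.DictReader(io.StringIO(csv_text)):
        version = row["version"]
        free[version] += int(row["free_signups"])
        paid[version] += int(row["paid_signups"])

    rates = {}
    for version in sorted(free.keys() | paid.keys()):
        total = free[version] + paid[version]
        # Guard against a version with no sign-ups at all.
        rates[version] = paid[version] / total if total else 0.0
    return rates


# Made-up example data, for illustration only.
SAMPLE = """date,version,free_signups,paid_signups
2024-01-01,A,90,10
2024-01-01,B,80,20
2024-01-02,A,95,5
2024-01-02,B,85,15
"""

if __name__ == "__main__":
    for version, rate in conversion_rates(SAMPLE).items():
        print(f"{version}: {rate:.1%}")
```

For the chart, you could pass `list(rates)` and `list(rates.values())` to matplotlib’s `pyplot.bar`. Keep in mind that a gap between two rates can still be noise when the sample is small, so the comparison alone isn’t proof that the new version works better.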

In general, use AI for the things you can’t do yourself, like writing code or processing large amounts of text. If sending data to ChatGPT worries you, you can ask your IT team to deploy a private HuggingChat instance running the AI model of your choice on your own servers. Currently, open-source models aren’t as good as ChatGPT, but I guess it’s better than nothing.

AI as an Intellectual Sparring Partner

Do you have a brilliant idea? It would be painful to share it with your team only to watch them tear it to pieces and show you it wasn’t as good as you thought. Fortunately, AI can do the same without you looking bad in front of other people. We don’t do it to feel terrible but to improve our ideas before we share them.

How do you test your ideas before sharing them? Have you heard of Socratic questioning? Let me demonstrate the process using a website idea that has become popular lately. (No, I’m not making fun of people building a clone of a website that is already a clone of another website. Well, maybe a little bit.)

Use Socratic questioning to improve the idea I have and help me understand whether it makes sense:

I want to build a website with lists of custom AI models created by the community. We call them GPTs. I have seen dozens of such websites already, and I think I should build one, too.

In hindsight, I should have told AI to ask those questions one by one.

What to do with the questions? Answer them. Obviously. You can also type your answers into the chat and get another set of questions, for example, questions challenging the assumptions you made while answering.
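If you’d rather script this conversation (for example, through a chat-completion API), the two lessons above translate into how you build the message history: tell the model to ask one question at a time, then append its question and your answer on every turn. The sketch below only builds the role/content message list that chat APIs such as OpenAI’s chat completions accept. `start_session` and `add_turn` are hypothetical names, the instruction wording is my own adaptation of the prompt above, and the actual API call is left out.

```python
from __future__ import annotations

# Hypothetical instruction: the prompt above plus the "one by one" fix and
# a request to challenge the assumptions behind each answer.
SOCRATIC_INSTRUCTION = (
    "Use Socratic questioning to improve the idea I have and help me "
    "understand whether it makes sense. Ask one question at a time and wait "
    "for my answer before asking the next one. When I answer, question the "
    "assumptions behind my answer."
)


def start_session(idea: str) -> list[dict[str, str]]:
    """Open the conversation: the instruction and the idea as the first user message."""
    return [{"role": "user", "content": f"{SOCRATIC_INSTRUCTION}\n\n{idea}"}]


def add_turn(messages: list[dict[str, str]], question: str, answer: str) -> list[dict[str, str]]:
    """Return a new history with the model's question and your answer appended."""
    return messages + [
        {"role": "assistant", "content": question},
        {"role": "user", "content": answer},
    ]


if __name__ == "__main__":
    history = start_session("I want to build a website with lists of custom AI models created by the community.")
    history = add_turn(history, "Who would visit the site, and why?", "People looking for GPTs.")
    for message in history:
        print(f"{message['role']}: {message['content'][:60]}")
```

Returning a new list instead of mutating the old one keeps every earlier state of the conversation around, which makes it easy to go back and try a different answer.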
